8. World Models (w/ Anil Bawa-Cavia)

Description

Anil Bawa-Cavia (AA Cavia) is one of our favorite writers and practitioners on the philosophy of computation. We discovered his work through Logiciel, on &&& (we <3 &&&!), both a...

This conversation is a really nice parallel to Anil’s amazing chapter in Choreomata, in which he identifies the bottlenecks we are rapidly approaching through deep learning as, in part, products of incomplete thinking as to the nature of language, learning, their messy and entangled relationship to the “world,” and their reconsumptive throughput as it assembles into what we increasingly understand as something like intelligence.

We want this conversation to be accessible to as many listeners as possible, so here are some further references and definitions that might be useful:

- I’ll be honest, I was surprised when I learned how radically different (and how totally gendered) the “Turing Test” was in its original formulation from what it’s become known to be. Read about it directly via: Turing - Can Machines Think (https://redirect.cs.umbc.edu/courses/471/papers/turing.pdf).

- It’s likely the distinction between supervised and unsupervised learning is very clear to most listeners, but if you’re unfamiliar with this distinction, see a sufficient overview here (https://www.ibm.com/blog/supervised-vs-unsupervised-learning/). This becomes important as Anil starts to speak to the implications of things like pedagogy and normativity for learning.

- The concept of normativity is used quite a bit here in a way that might be unfamiliar to some people. Think of normativity as the moment the word “should” enters into some construct, both in the prescriptive sense (“you should behave according to xyz social norms”) and, to some extent, in the empirical sense (“based on what I’ve observed so far, this type of outcome should result from this interaction”). While we encode norms into language models (both through supervised learning and through the hidden organizing principles contained within complex structures like language), we do not encode “normativity,” that is, a way of engaging with norms as norms. This is a good place to start when trying to understand the critique from inferentialism that Anil brings from Wilfrid Sellars and Robert Brandom.

- An “embedding” is essentially the ability to place some system or configuration within another system in such a way that its general shape is retained. In the context of machine learning, language is embedded into a high-dimensional numerical space wherein meaning can be identified by the proximity of various words within that space, and translations between languages can be accomplished by looking at the position of words within one language’s embedding and correlating that to a similar set of positions in another. You don’t need to understand topology to intuit what this might look like in a way that is sufficiently useful. Anil playfully refers to “embedding” in the work of Wilfrid Sellars, a philosopher who argues that everything we know is ‘embedded’ within complex webs of beliefs, norms, and meanings.

- Anil references Alain Badiou’s writings on finitude, and it’s our impression that this is a reference to Badiou’s completion of his enormously sprawling Being and Event trilogy (“The Immanence of Truths”). Not an essential book for this podcast or a barrier to understanding Anil’s work, nor for the faint of heart in terms of its scope, but if you’re intrigued by “an all out attack on finitude” — go for it!

- For some more content on what the “multiple realizability” of computation looks like (how computation enjoys meaningful distinction from hardware), we love Laura Tripaldi's Parallel Minds.

- Anil references James Ladyman & Don Ross, whose work he repurposes in a critical way — see “Every Thing Must Go: Metaphysics Naturalized.”

- We love love love Anil’s interview on Interdependence (https://interdependence.fm/episodes/inhuman-intelligence-with-anil-bawa-cavia).
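If the note on embeddings above feels abstract, here is a minimal sketch of the intuition in Python. Everything in it is an assumption made for illustration: the three-dimensional vectors are hand-picked rather than produced by any real model, the names `ENGLISH`, `SPANISH`, `cosine`, and `nearest` are hypothetical, and the two languages are assumed to already share one coordinate system (real systems have to learn that alignment).

```python
import math

# Hypothetical toy embeddings: hand-picked 3-D vectors, not output from a real model.
ENGLISH = {
    "cat": (0.9, 0.1, 0.0),
    "dog": (0.8, 0.3, 0.0),
    "car": (0.0, 0.2, 0.9),
}
# Assumption: the Spanish space is already aligned with the English one.
SPANISH = {
    "gato": (0.88, 0.12, 0.02),
    "perro": (0.79, 0.33, 0.01),
    "coche": (0.02, 0.18, 0.92),
}


def cosine(a, b):
    """Cosine similarity: close to 1.0 when two vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def nearest(vector, space, exclude=None):
    """Return the word in `space` whose vector is most similar to `vector`."""
    candidates = (word for word in space if word != exclude)
    return max(candidates, key=lambda word: cosine(vector, space[word]))


# Proximity within one space stands in for relatedness of meaning.
closest_to_cat = nearest(ENGLISH["cat"], ENGLISH, exclude="cat")

# "Translation" here is just looking up the matching position in the other space.
translated_car = nearest(ENGLISH["car"], SPANISH)

print(closest_to_cat, translated_car)
```

With these toy numbers, "cat" sits nearest to "dog" in the English space, and the position of "car" lines up with "coche" in the Spanish one. Real embeddings have hundreds or thousands of dimensions, but the lookup works on the same principle.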

We love this episode! Enjoy!

Information

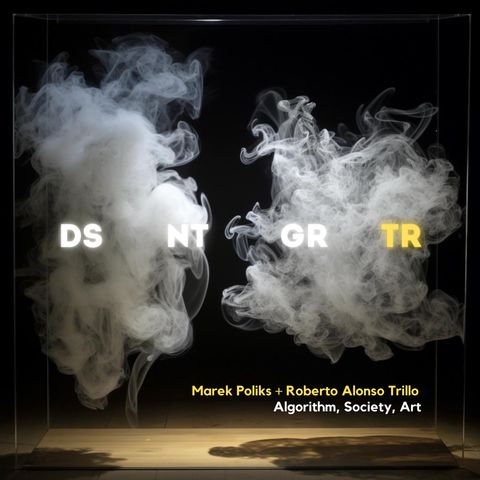

| Author | Marek Poliks, Roberto Alonso |

| Organization | Marek Poliks |

| Website | - |

| Tags |

-

|

Copyright 2024 - Spreaker Inc. an iHeartMedia Company